Hey, what’s up, everybody? In this article, I will discuss what latency is, how it is measured, and why the p50, p90, and p99 latency metrics matter to you as a developer.

Before answering the question in the title, we need to understand the concept of latency.

The story

Feel free to skip to the main content. Here I’m going to talk about how I got introduced to this topic.

So one fine day, my team lead asked me to check the latencies of our services on the APM. Escalations had been raised that some of our services could not handle the load, which was causing latencies to increase.

I was like: what are these latencies? Where do I find them?

After some Google searches, I found my answer and shared the screenshot. Like any engineer, I was not satisfied with just sharing the data. So I did some more Google searches, read some articles, and figured out all about latency and the p50, p90, and p99 latency metrics.

But this process took some time, as the information I was looking for was scattered over the internet. Therefore, I decided to collate all this information into one blog post.

That’s all about my story. Let’s get into action now.

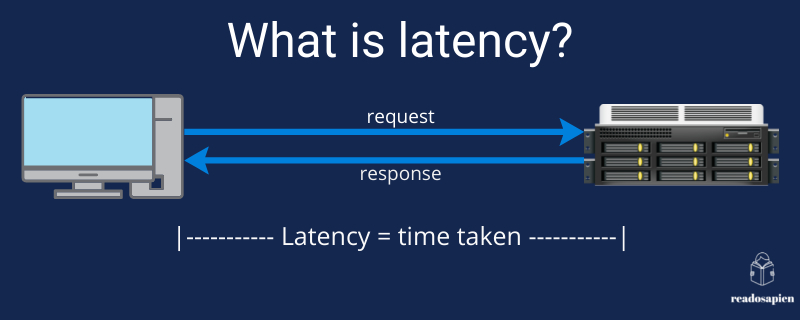

What is latency?

Latency is the total time taken for data to reach its destination. It is usually measured as the round-trip time: the time from when a request is sent until its response is received.

In simple words:

- The client sends a request to the server.

- The server responds to the client.
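To make the round trip concrete, here is a minimal sketch of timing a single request in Python. The `fake_request` function is a hypothetical stand-in for a real network call (it just sleeps for about 10 ms), and `measure_latency_ms` is an illustrative helper name, not part of any APM tool.

```python
import time


def measure_latency_ms(send_request):
    """Return the round-trip time of one call to send_request, in milliseconds."""
    start = time.perf_counter()
    send_request()  # blocks until the "response" comes back
    return (time.perf_counter() - start) * 1000.0


def fake_request():
    # Hypothetical stand-in for a real client -> server -> client round trip.
    time.sleep(0.01)


latency = measure_latency_ms(fake_request)
print(f"round trip took {latency:.1f} ms")
```

In a real service you would typically not time requests by hand like this; an APM agent or metrics library records one such measurement per request.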

The most awaited answer

p50, p90, and in general pXY are metrics for measuring the latency of your services. The number denotes a percentile of the total requests.

p50 – The 50th latency percentile: 50% of the requests will be faster than the p50 value.

p90 – The 90th latency percentile: 90% of the requests will be faster than the p90 value.

Let’s take an example to simplify this further.

Say we have the following latencies in milliseconds:

50, 78, 25, 90, 102, 68.

Now arrange them in ascending order, and you get:

25, 50, 68, 78, 90, 102.

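The p50 latency is the next value after skipping the first 50% of the data (the first 3 of the 6 values), i.e. 78.

Similarly, p90 = 102: 90% of 6 values is 5.4, so we skip the first 5 values and take the next one.

Keep in mind that tools differ in how they compute percentiles on small samples. For this data, the nearest-rank method gives a p50 of 68, and linear interpolation gives 73. With thousands of requests, these differences become negligible.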
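The calculation above can be sketched in a few lines of Python. This follows the "skip the first p% of the sorted data" rule used in this example; the function name `percentile` is just an illustrative choice, and monitoring tools may use a different percentile definition.

```python
def percentile(latencies, p):
    """Return the p-th percentile using the 'skip the first p%' rule."""
    if not latencies:
        raise ValueError("need at least one latency value")
    ordered = sorted(latencies)
    # Number of values to skip; integer math avoids float rounding surprises.
    index = (len(ordered) * p) // 100
    # Clamp so that p = 100 still returns the largest value.
    index = min(index, len(ordered) - 1)
    return ordered[index]


latencies_ms = [50, 78, 25, 90, 102, 68]
print(percentile(latencies_ms, 50))  # 78
print(percentile(latencies_ms, 90))  # 102
```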

Let’s see if you got the example: comment below with the p75 for the data above.

What is the significance of p50 p90 p99 latency?

The lower the values of these metrics, the better your service is performing. In order of precedence, they rank in descending order, i.e. p99, p90, p50, because the higher percentiles capture your slowest requests, which a healthy-looking p50 can hide.

So the lower your p99 and p90, the better your service / APIs are.
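A small made-up data set shows why the higher percentiles deserve the most attention. In this sketch, 95 out of 100 requests take 20 ms and 5 take 2000 ms; the numbers are invented for illustration, and `percentile` is a hypothetical helper that applies the same "skip the first p%" rule as the worked example earlier in the article.

```python
def percentile(latencies, p):
    """Return the p-th percentile using the 'skip the first p%' rule."""
    ordered = sorted(latencies)
    index = min((len(ordered) * p) // 100, len(ordered) - 1)
    return ordered[index]


# Invented sample: 95 fast requests and 5 very slow ones.
latencies_ms = [20] * 95 + [2000] * 5

average = sum(latencies_ms) / len(latencies_ms)
print("average:", average)                   # 119.0
print("p50:", percentile(latencies_ms, 50))  # 20
print("p90:", percentile(latencies_ms, 90))  # 20
print("p99:", percentile(latencies_ms, 99))  # 2000
```

Here p50 and p90 both look healthy, and only p99 reveals that some users are waiting two seconds for a response.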

I would also recommend reading Domain-Driven Design by Eric Evans to learn more about how systems are designed.
